The iTunes Store continues to grow. The data Apple published at its last event included the following:

- 15 billion iTunes song downloads

- 130 million book downloads

- 14 billion app downloads

- $2.5 billion paid to developers

- 225 million accounts

- 425k apps

- 90k iPad apps

- 100k game and entertainment titles

- 50 million game center accounts

As this data is added to the existing data and cross-referenced, additional insight into the economics of iTunes is emerging.

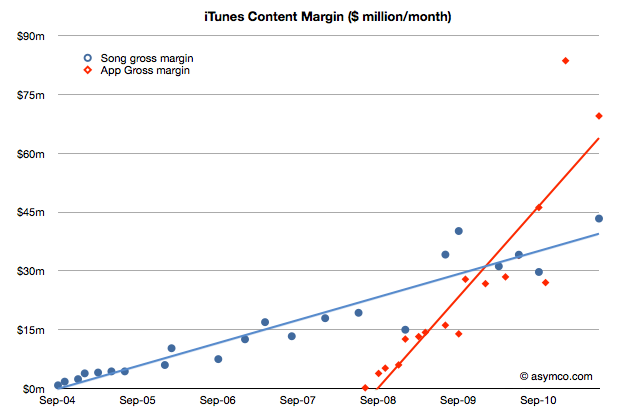

Since we know something about the average price of songs and apps, and we know the split between developers and Apple (and roughly between music labels and Apple), we can get a rough estimate of the amount Apple retains to run its store.
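As a rough illustration of that reasoning, here is a minimal sketch that backs out Apple's retained amount on apps from the cumulative developer payout in the list above. It assumes the commonly cited 70/30 split between developers and Apple, which is not stated explicitly in this post, and the function name `apple_retained` is made up for the example. The music side is left out because the label split is only roughly known.

```python
# Assumption: developers receive 70% of app revenue and Apple keeps 30%.
# This is the commonly cited App Store split, not a figure from the post.
DEVELOPER_SHARE = 0.70


def apple_retained(paid_to_developers: float,
                   developer_share: float = DEVELOPER_SHARE) -> float:
    """Back out the amount Apple retains from the developer payout."""
    gross_sales = paid_to_developers / developer_share
    return gross_sales - paid_to_developers


paid = 2.5e9  # cumulative amount paid to developers, from the list above
gross_sales = paid / DEVELOPER_SHARE
retained = apple_retained(paid)

print(f"Implied gross app sales: ${gross_sales / 1e9:.2f} billion")
print(f"Implied amount retained by Apple: ${retained / 1e9:.2f} billion")
```

Note that these are cumulative totals since the App Store opened, not monthly figures, so they give only a sense of scale rather than a current run rate.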

The following chart shows iTunes “content margin” by month. This margin is what Apple “keeps” after paying content owners but before paying for other costs like payment processing and delivery/fulfillment, which should be accounted for as variable costs. Strictly speaking, this margin is not “gross margin”.

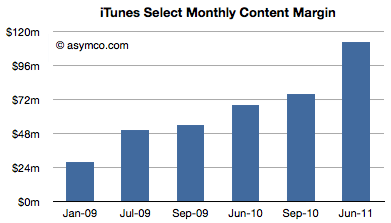

If we add the content margins from music and apps and assume the store runs at break-even, we can get an idea of what it costs to operate the store. The latest number is $113 million per month (from a total income of $313 million per month), which implies over $1.3 billion per year.
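The arithmetic behind those figures can be sketched in a few lines. The two monthly numbers are the ones quoted above; the variable names are mine, and the break-even assumption (content margin equals operating cost) is the same one made in the text.

```python
# Monthly figures quoted in the text, in dollars.
monthly_content_margin = 113e6  # what Apple keeps after paying content owners
monthly_total_income = 313e6    # total store income

# Under the break-even assumption, the content margin is the operating budget.
annual_operating_budget = monthly_content_margin * 12

# Share of total income that Apple retains as content margin.
margin_share = monthly_content_margin / monthly_total_income

# Remainder implied to go to content owners (labels and developers).
monthly_paid_to_owners = monthly_total_income - monthly_content_margin

print(f"Annual operating budget: ${annual_operating_budget / 1e9:.2f} billion")
print(f"Content margin share of income: {margin_share:.1%}")
print(f"Implied monthly payout to content owners: ${monthly_paid_to_owners / 1e6:.0f} million")
```

On these numbers Apple retains a bit more than a third of store income, and the annualized figure is simply the latest month multiplied by twelve, so it will understate the total if the store keeps growing.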

Much of that cost does go into serving the content (traffic and payment processing). Some of it goes to curation and support. But it’s very likely that there is much left over to be invested in capacity increases.

I would like to hear alternative opinions, but my guess is that much of the capex that went into the new data centers Apple built came from the iTunes operating margin.
