You’ve probably heard of Jony at Apple but probably don’t know about Johny.

Jony is a celebrity executive known as the face of Apple Design. Johny is the executive in charge of custom silicon and hardware technologies across Apple’s entire product line.

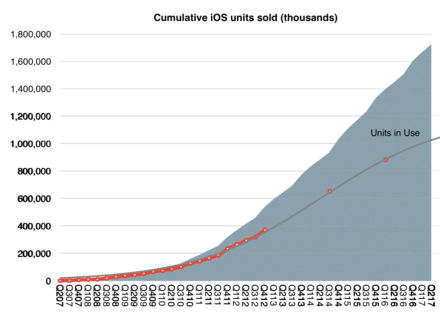

Under Johny’s leadership, Apple has shipped 1.7 billion processors in more than 20 models and 11 generations. Apple currently ships more microprocessors than Intel.[1]

The Apple A11 Bionic processor has 4.3 billion transistors, six cores and an Apple custom GPU, built on a 10nm FinFET process. Its performance appears to be almost double that of competitors and in some benchmarks exceeds the performance of current laptop PCs.

A decade after making the commitment to control the critical subsystems in its (mobile) products, Apple has come to the point where it dominates the processor space. But it has not stopped at processors. The effort now spans all manner of silicon, including controllers for displays, storage, sensors and batteries. The S series in the Apple Watch, the haptic T series in the MacBook and the wireless W series in AirPods are ongoing efforts. The GPU was conquered in the past year. Litigation with Qualcomm suggests the communications stack is next.

This across-the-board approach to silicon is not easy or fast or cheap. This multi-year, multi-billion dollar commitment is rooted in the Jobsian observation that the existing supplier network is not good enough for what you’re driving at. Tiny EarPods, Smart Watches, Augmented Reality, Adaptive Acoustics require wrapping your arms around all parts of the problem. The integration and control it demands are in contrast to the modular approach of assembling off-the-shelf components into a good-enough configuration.

There are times and places where modules are adequate and times and places where they aren’t. The decision depends on whether you are creating new experiences or new “measures of performance” vs. optimizing for cost within existing experiences or measures of performance.

The very notion of a microprocessor is a rejection of the discrete component designs that preceded it. Earlier computers had central processors made up of many discrete components. VLSI stands for Very Large Scale Integration, with the emphasis on Integration. As computing progressed toward ambience and ubiquity, the idea of using discrete components became normative again, but Apple did not consider that sufficient.

So while the “Silicon” in Silicon Valley has come to be seen as an anachronism, silicon development today means competitive advantage. The only problem is that it takes years, decades even, to establish competence: the same span of time it took to build Apple into a design-centric business fronted by Jony Ive.

Apple also now needs to be understood along the dimension of silicon-centric engineering as led by Johny Srouji.

[1] Trailing 12 months’ PC shipments: 265 million. Equivalent iOS devices: 281 million. Apple processors in Apple TV are not included.
